Alternative Computing Technologies (ACT) Lab

Computer Science and Engineering

University of California, San Diego

DnnWeaver v2.0 is out!

Look for our demo at HotChips'18

Flint Center for the Performing Arts, Cupertino, California

Sunday-Tuesday, August 19-21, 2018

Source code for real-time object recognition using DnnWeaver v2.0 is available here:

Short Introduction

Long Introduction

Please check out DnnWeaver v1.0 below.

DnnWeaver v1.0

DnnWeaver is the first open-source framework for accelerating Deep Neural Networks (DNNs) on FPGAs. While FPGAs are an attractive choice for accelerating DNNs, programming an FPGA is difficult. With DnnWeaver, our aim is to bridge the semantic gap between the high-level specifications of DNN models used by programmers and FPGA acceleration. With our framework, the programmer only specifies the Deep Neural Network in the Caffe format. The framework then automatically generates accelerator Verilog code specialized for the given network, using our hand-optimized Verilog templates. DnnWeaver is under development at the Alternative Computing Technologies (ACT) Laboratory, University of California, San Diego. Below, you can download our framework and the Verilog code for our template architecture.

Citation

If you use this work, please cite our paper (PDF, Bibtex) published in The 49th Annual IEEE/ACM International Symposium on Microarchitecture (MICRO), 2016.

H. Sharma, J. Park, D. Mahajan, E. Amaro, J. K. Kim, C. Shao, A. Mishra, H. Esmaeilzadeh, "From High-Level Deep Neural Models to FPGAs", in the Proceedings of the 49th Annual IEEE/ACM International Symposium on Microarchitecture (MICRO), 2016.

Workflow

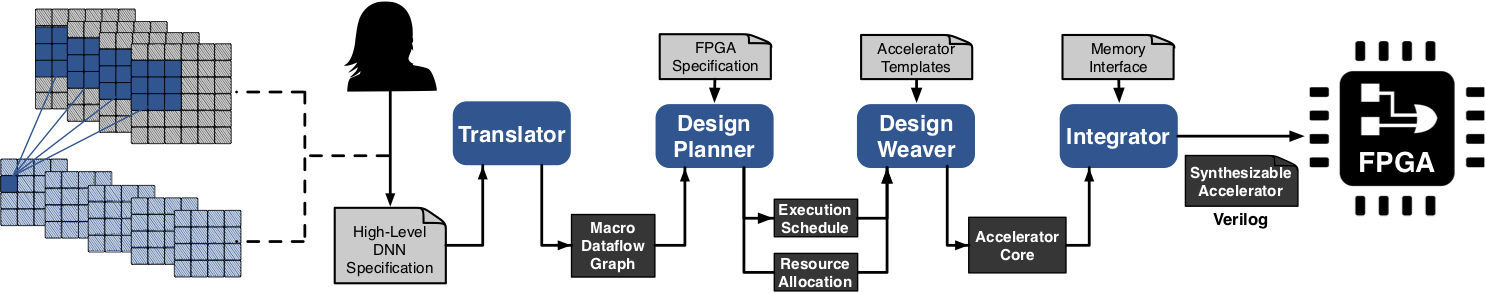

DnnWeaver consists of four components:

Translator:

The Translator component of DnnWeaver creates a macro-dataflow graph of the DNN model using our Instruction Set Architecture (ISA). Each node of the macro-dataflow graph represents a layer of the DNN model. DnnWeaver then uses the macro-dataflow graph to generate a static execution schedule for the accelerator.
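As a rough illustration of the idea, the sketch below represents a small network as a graph with one node per layer and derives a static execution order from it. The layer names and the dictionary representation are assumptions made for this example; they are not DnnWeaver's ISA or its internal data structures, and a topological sort is used only as a stand-in for the scheduling step.

```python
from graphlib import TopologicalSorter

# Hypothetical network: each key is a layer (one graph node), and the
# value lists the layers whose outputs it consumes.
layers = {
    "conv1": [],
    "pool1": ["conv1"],
    "conv2": ["pool1"],
    "pool2": ["conv2"],
    "ip1": ["pool2"],
}

def static_schedule(graph):
    """Return layer names in an order that respects data dependencies."""
    return list(TopologicalSorter(graph).static_order())

schedule = static_schedule(layers)
print(schedule)
```

Because the order is computed once, before execution, the accelerator needs no run-time dependency tracking in this simplified picture.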

Design Planner:

The Design Planner optimizes the following parameters to specialize the accelerator for the given DNN model:

- The organization of the accelerator to maximize performance.

- The slicing of the data to minimize off-chip memory accesses.
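The slicing trade-off can be illustrated with a toy cost model. The sketch below assumes a single stride-1 convolution layer processed in horizontal slices of output rows, a fixed on-chip buffer, and weights that are reloaded for every slice. All function names, parameters, and the cost model itself are assumptions for illustration; DnnWeaver's actual design-space exploration is described in the paper and is not reproduced here.

```python
import math

def offchip_words(t, out_rows, width, kernel, weight_words):
    """Toy off-chip traffic (in words) when the layer is processed in
    slices of t output rows each."""
    n_slices = math.ceil(out_rows / t)
    # Each slice re-fetches (kernel - 1) overlapping input rows.
    input_traffic = (out_rows + n_slices * (kernel - 1)) * width
    # Assumption: weights are reloaded once per slice.
    weight_traffic = n_slices * weight_words
    output_traffic = out_rows * width
    return input_traffic + weight_traffic + output_traffic

def best_slice(out_rows, width, kernel, weight_words, buffer_words):
    """Pick the slice height that fits on chip and minimizes traffic."""
    candidates = [
        t for t in range(1, out_rows + 1)
        # The input slice and the output slice must both fit in the buffer.
        if (t + kernel - 1) * width + t * width <= buffer_words
    ]
    if not candidates:
        raise ValueError("no slice height fits in the on-chip buffer")
    return min(
        candidates,
        key=lambda t: offchip_words(t, out_rows, width, kernel, weight_words),
    )

t = best_slice(out_rows=32, width=32, kernel=5, weight_words=25, buffer_words=1024)
print(t, offchip_words(t, 32, 32, 5, 25))
```

In this model, larger slices reduce the number of times overlapping rows and weights are re-fetched, but the buffer capacity caps how large a slice can be.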

Design Weaver:

The Design Weaver generates the accelerator core from our hand-optimized Verilog templates, using the parameters chosen by the Design Planner.
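Template-based generation of this kind can be sketched as plain parameter substitution. The Verilog module, its parameter names, and the `weave` function below are hypothetical and exist only to show the mechanism; they are not DnnWeaver's templates or API.

```python
from string import Template

# Hypothetical Verilog template; not one of DnnWeaver's actual templates.
PE_ARRAY_TEMPLATE = Template("""\
module pe_array #(
    parameter NUM_PE = $num_pe,
    parameter DATA_WIDTH = $data_width
) (
    input wire clk,
    input wire reset
);
    // Processing-element instances would be generated here.
endmodule
""")

def weave(num_pe, data_width):
    """Fill the template with parameters chosen by a planning step."""
    return PE_ARRAY_TEMPLATE.substitute(num_pe=num_pe, data_width=data_width)

verilog = weave(num_pe=8, data_width=16)
print(verilog)
```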

Integrator:

The Integrator is the final component of DnnWeaver and appends the memory interface code to the accelerator. As part of the initial release, DnnWeaver includes the memory interface code for the Xilinx Zynq ZC7020 board.

Benchmark List

DnnWeaver supports a wide range of Convolutional Neural Networks. The following are the benchmark models we used for the experimental results in our paper:

Benchmark Deep Neural Network (DNN) Models

| # | Benchmark Name | Data Set | Description | Number of Layers | Model Size | Number of Operations | Lines of Code (prototxt) |
|---|---|---|---|---|---|---|---|
| 1 | LeNet | MNIST | Hand-written Digit Recognition | 7 | 0.8 MB | 2 GOps | 128 |
| 2 | Cifar-10 Full | Cifar-10 | Object Recognition | 12 | 0.2 MB | 12 GOps | 156 |
| 3 | Network-in-Network (NiN) | ILSVRC 2012 | Object Detection and Classification | 28 | 14.5 MB | 1106 GOps | 516 |
| 4 | Djinn-ASR | Djinn and Tonic | Speech-to-text Decoder | 13 | 48.4 MB | 25 GOps | 105 |
| 5 | AlexNet | ILSVRC 2012 | Object Detection and Classification | 20 | 119.0 MB | 1147 GOps | 278 |
| 6 | VGG-CNN-S | ILSVRC 2012 | Object Detection and Classification | 19 | 196.0 MB | 2666 GOps | 200 |
| 7 | Overfeat | ILSVRC 2012 | Object Detection and Classification | 16 | 278.0 MB | 2798 GOps | 196 |
| 8 | VGG-16 | ILSVRC 2012 | Object Detection and Classification | 36 | 324.0 MB | 16362 GOps | 347 |

License

Copyright 2017 Hadi Esmaeilzadeh

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with the License. You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software distributed under the License is distributed on an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the License for the specific language governing permissions and limitations under the License.

Developers

Hardik Sharma, Jongse Park, Divya Mahajan, Emmanuel Amaro, Joon Kyung Kim, and Hadi Esmaeilzadeh

Maintained by

Hardik Sharma

Patrons